Automating Google Lighthouse audits and uploading results to Azure

This article covers configuring Lighthouse CI to run against a website and uploading the results to a Lighthouse CI server Docker container running both locally and in Azure.

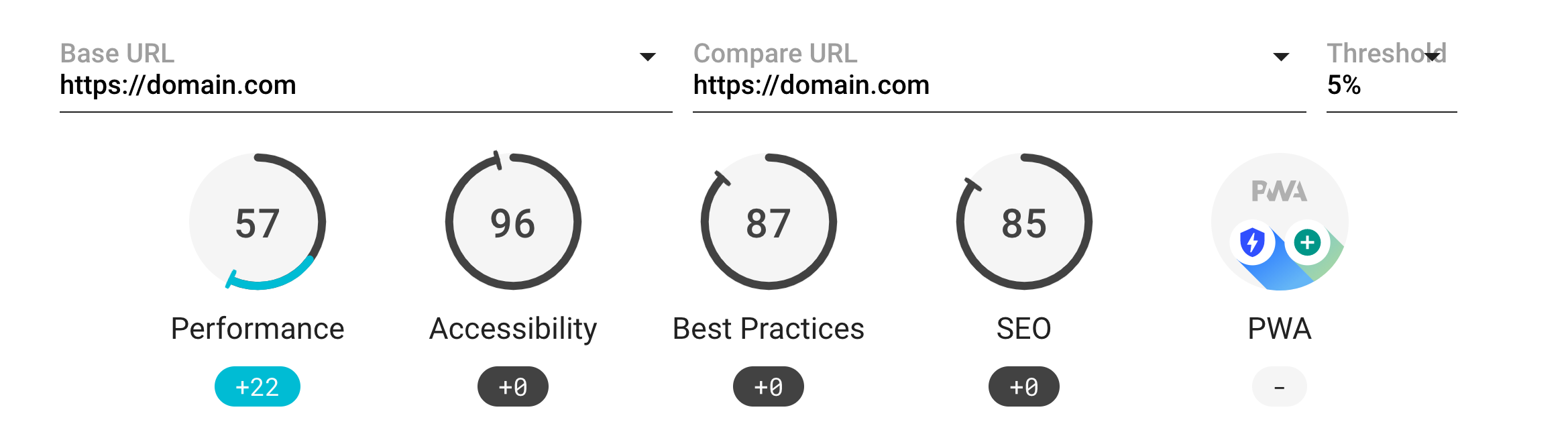

Lighthouse is a great tool for auditing your website on performance, web accessibility, PWA, SEO and more. By automating Lighthouse to run nightly or with each commit and by uploading the results to a Lighthouse CI server, you can track your scores over time, see trends and detect when recent changes have had a negative impact.

Configuring Lighthouse CI to audit your site

Lighthouse CI is designed to have its configuration files live within your project's Git repo so that, when it runs, it can include the branch name and last commit details with each report. This is especially useful with reports uploaded to a Lighthouse CI server, as you can compare results for different commits to help track down when scores changed.

Note: If you don’t use Git, Lighthouse CI can be configured for use without a Git repo.

In this article, I’m going to create a new Git repo for an empty project but if you have an existing project you want to audit, you can skip this first step.

Create a folder for the empty project and initialise a Git repo.

    mkdir my-website
    cd my-website
    git init --initial-branch=main

Create the folder lighthouse for the configuration files.

    mkdir lighthouse
    cd lighthouse

Create a new package.json.

    npm init -y

Install @lhci/cli.

    npm install --save-dev @lhci/cli

Create the configuration file lighthouserc.js with the following settings:

    module.exports = {
      ci: {
        collect: {
          url: [
            'https://keepinguptodate.com/code-snippets/'
          ],
          numberOfRuns: 1, // Set low to speed up the test runs. Default is 3.
          headful: true, // Show the browser which is helpful when checking the config
          settings: {
            disableStorageReset: true, // Don't clear localStorage / IndexedDB / etc before loading the page
            preset: 'desktop'
          }
        },
        upload: {
          target: 'temporary-public-storage'
        }
      }
    };

The url property should contain an array of URLs for the pages on your website that you want to audit.

numberOfRuns has been set to 1 to speed up the runs, but it should typically be set to 3 or more. Lighthouse CI uses the median result, which smooths out some of the run-to-run volatility in the scores.
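To see why several runs help, here is a minimal sketch of taking a median over per-run scores. The medianScore helper and the sample scores are hypothetical, and Lighthouse CI's own choice of the representative run may be more involved than this; the sketch only illustrates how a median ignores a single outlier.

```javascript
// Hypothetical helper: returns the median of a list of Lighthouse scores (0 to 1).
function medianScore(scores) {
  if (scores.length === 0) {
    throw new Error('At least one score is required');
  }
  // Copy before sorting so the caller's array is left untouched.
  const sorted = [...scores].sort((a, b) => a - b);
  const middle = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 1
    ? sorted[middle]
    : (sorted[middle - 1] + sorted[middle]) / 2;
}

// Made-up performance scores from three runs; the second run was unusually slow.
const runs = [0.91, 0.62, 0.89];
console.log(medianScore(runs)); // → 0.89
```

With a single run, the slow 0.62 result could have been the one reported; with three runs, it is discarded.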

The upload target of temporary-public-storage means that, once run, Lighthouse CI will upload the results to a temporary public URL and output the URL in the console. Later, we'll set up a Lighthouse CI server to upload our results to.

If you created a new Git repo, stage the new files and make an initial commit for Lighthouse CI to pick up when it runs. Add node_modules to a .gitignore first so it isn't committed.

    git add .
    git commit -m "Initial commit"

Now we can run Lighthouse CI.

    npx lhci autorun

As the setting headful is true, Lighthouse CI will launch a visible Chrome instance as it runs. When Lighthouse CI has finished, the URL of the report is logged to the console.

    Running Lighthouse 1 time(s) on https://keepinguptodate.com/code-snippets/
    Run #1...done.
    Done running Lighthouse!
    Uploading median LHR of https://keepinguptodate.com/code-snippets/...success!
    Open the report at https://storage.googleapis.com/xxx/reports/yyy.report.html

The report can be viewed via the URL logged to the console. Alternatively, the report is available in the .lighthouseci folder created by Lighthouse CI. Note: This folder is cleared each time Lighthouse CI is run.
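If you would rather read the scores from a saved JSON result (LHR) than open the HTML report, a few lines of Node can summarise it. This is only a sketch: summariseScores is a hypothetical helper, the sample object is made up, and it assumes the LHR exposes a categories map whose entries carry a score between 0 and 1 (or null when a category could not be scored).

```javascript
// Hypothetical helper: turns a Lighthouse result (LHR) object into
// a { categoryId: percentage } map.
function summariseScores(lhr) {
  const summary = {};
  for (const [id, category] of Object.entries(lhr.categories || {})) {
    // Scores are fractions, so convert them to whole percentages.
    summary[id] = category.score === null ? null : Math.round(category.score * 100);
  }
  return summary;
}

// Made-up, heavily trimmed LHR used only for illustration.
const sampleLhr = {
  categories: {
    performance: { title: 'Performance', score: 0.87 },
    accessibility: { title: 'Accessibility', score: 1 },
    seo: { title: 'SEO', score: null }
  }
};

console.log(summariseScores(sampleLhr));
```

In practice, the object passed in would come from JSON.parse on a result file; the exact file names inside .lighthouseci are not covered here.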

Authenticating before running Lighthouse audits

If your website has pages that are only available to authenticated users, a Puppeteer script can be used to control Chrome (e.g. enter an email address and password and click a login button) before the Lighthouse audit is run.

Given the following login form:

    <form action="#" method="post">
      <h1>Login</h1>
      <label for="email">Email</label>
      <input id="email" name="email" autocomplete="username" type="email" required>
      <label for="current-password">Password</label>
      <input id="current-password" name="current-password" autocomplete="current-password"
             type="password" minlength="8" required>
      <button value="login">Login</button>
    </form>

This Puppeteer script will enter an email address and password and click the login button.

    const EMAIL = 'user@domain.com'; // Could use process.env.EMAIL for env var
    const PASSWORD = 'xxx'; // Could use process.env.PASSWORD for env var

    /**
     * @param {import('puppeteer').Browser} browser
     * @param { {url: string, options: LHCI.CollectCommand.Options} } context
     */
    module.exports = async (browser, { url }) => {
      const page = await browser.newPage();
      await page.setViewport({ width: 1024, height: 600 });
      await page.goto(url);

      // This login script is run for every URL, so check if the user is already
      // authenticated and, if so, return early without doing anything.
      const userDropdownElement = await page.$('#userDropdown');
      if (userDropdownElement !== null) {
        await page.close();
        return;
      }

      // Enter the email address and password and log in
      const emailInput = await page.$('#email');
      await emailInput.type(EMAIL);
      const passwordInput = await page.$('#current-password');
      await passwordInput.type(PASSWORD);
      const loginButton = await page.$('button[value=login]');

      // Start waiting for the navigation before clicking so a fast redirect isn't missed.
      // networkidle2: no more than 2 network connections for at least half a second.
      await Promise.all([
        page.waitForNavigation({ waitUntil: 'networkidle2' }),
        loginButton.click()
      ]);

      await page.close();
    };

To use this script, saved with the filename login-script.js, we first need to install Puppeteer.

    npm install puppeteer --save-dev

And then update the Lighthouse CI configuration file lighthouserc.js to run this Puppeteer script before each URL is audited.

    module.exports = {
      ci: {
        collect: {
          ...
          puppeteerScript: 'login-script.js', // Ensure there's an authenticated user before running Lighthouse
          puppeteerLaunchOptions: {
            defaultViewport: null
          },
          ...
        }
      }
    };

Uploading results to a local Lighthouse CI server

We're going to run the Lighthouse CI server in a Docker container, so first ensure you have Docker Desktop installed and running. The Lighthouse CI server image is available on Docker Hub.

Download the latest version of the Docker image.

docker pull patrickhulce/lhci-serverCreate a Docker volume to store files that should be persisted. The Docker image uses SQLite for it’s database, which uses files stored on the filesystem, so using a volume ensures results are not lost when the container restarts.

docker volume create lhci-dataNow we can start an instance of the Lighthouse CI server on port 9001.

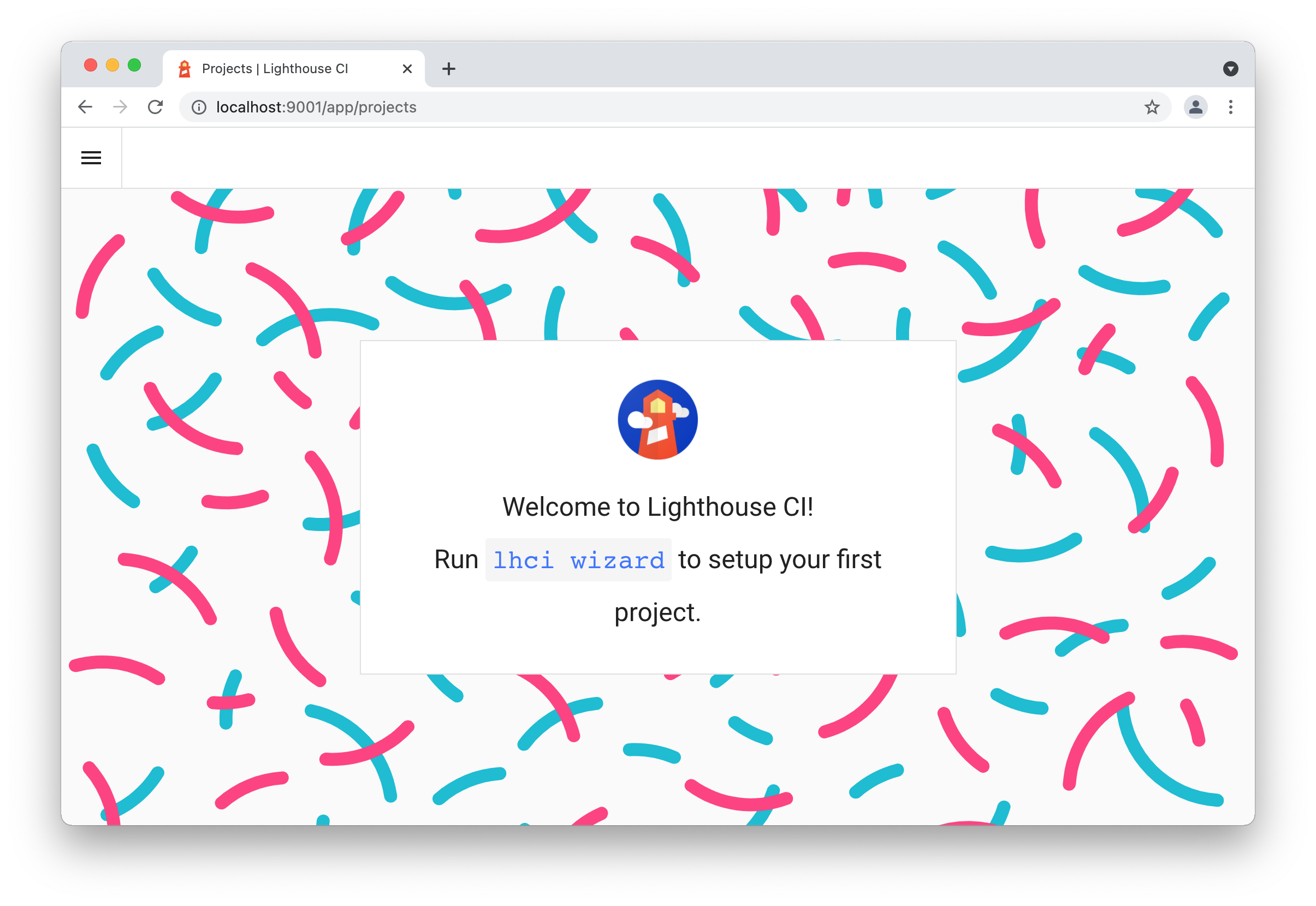

docker container run --publish 9001:9001 --mount source=lhci-data,target=/data --detach patrickhulce/lhci-serverOnce the instance has started, navigate to http://localhost:9001/. where you should see the dashboard welcome page.

As detailed on the welcome page, we now need to create a project for our Lighthouse results to be uploaded to. We can use the Lighthouse CI wizard to walk us through these steps.

    npx lhci wizard

Choose the option new-project, enter the server URL of http://localhost:9001/ and give the project a suitable name. The Lighthouse CI server will then output a build token and an admin token. Keep these tokens safe as they will be needed later.

If you navigate to the Lighthouse CI server on http://localhost:9001/, you will now see the project listed.

With our Lighthouse CI server running and having created a project, we now need to update the lighthouserc.js configuration file so when we run Lighthouse CI, the results are uploaded to our server. Update the upload section as per below:

    module.exports = {
      ci: {
        ...
        upload: {
          target: 'lhci',
          serverBaseUrl: 'http://localhost:9001',
          token: 'xxx' // The Lighthouse CI server build token for the project
        }
      }
    };

And with that, when Lighthouse CI is next run, the results will be uploaded to the server.

    npx lhci autorun

    Saved LHR to http://localhost:9001
    Done saving build results to Lighthouse CI
    View build diff at http://localhost:9001/app/projects/mywebsite/compare/xxx

Navigate to http://localhost:9001/ and open the project to see the results.
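Since the build token is a secret, you may prefer not to commit it to the repo. The following is a sketch of one way to build the upload section from environment variables. The helper name buildUploadConfig and the variable names MY_LHCI_BUILD_TOKEN and MY_LHCI_SERVER_URL are assumptions made for this example, not names defined by Lighthouse CI.

```javascript
// Hypothetical helper: builds the upload section of lighthouserc.js
// from an object of environment variables (e.g. process.env).
function buildUploadConfig(env) {
  const token = env.MY_LHCI_BUILD_TOKEN; // Assumed variable name, chosen for this example
  if (!token) {
    // No token available, so fall back to the temporary public storage used earlier.
    return { target: 'temporary-public-storage' };
  }
  return {
    target: 'lhci',
    // Assumed variable name; defaults to the local server from this article.
    serverBaseUrl: env.MY_LHCI_SERVER_URL || 'http://localhost:9001',
    token
  };
}

console.log(buildUploadConfig({ MY_LHCI_BUILD_TOKEN: 'xxx' }));
```

In lighthouserc.js, this could be wired in as upload: buildUploadConfig(process.env).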

Note: If you receive the error Unexpected status code 422, Build already exists for hash while performing test runs of Lighthouse CI, you can create an empty commit to bypass this.

    git commit --allow-empty -m "Lighthouse run"

Running a Lighthouse CI server in Azure

This part of the article covers running the Lighthouse CI server Docker image in Azure. As well as creating a web app to host the Docker image, we’ll also create a PostgreSQL database to store all the results.

Unfortunately, storage account file shares used with containers don’t support SQLite databases, so for this reason, we’re using a PostgreSQL database.

From Azure Portal, create a new resource of type “Azure Database for PostgreSQL”. Follow the wizard and select the compute and storage you require.

I chose the Single server plan with the Basic compute tier (1 vCore) and 5 GB of storage.

When prompted, enter a username and password for the PostgreSQL database.

Once the PostgreSQL database has been created, access the “Connection security” blade and enable Allow access to Azure services and disable Enforce SSL connection.

We’re ready to create the web app for the Lighthouse CI server.

Create a new resource of type “Web App for Containers”. Choose the operating system Linux and the SKU and size F1, which is free. From the “Docker” tab, select an image source of Docker Hub and, for the image and tag, enter patrickhulce/lhci-server. With those options in place, create the web app.

Once the web app has been created, we then need to configure it to connect to the PostgreSQL database.

From the web app, access the “Configuration” blade and add the setting LHCI_STORAGE__SQL_CONNECTION_URL with the connection details in the following format.

    postgresql://{user}%40{server}:{pass}@{server}.postgres.database.azure.com:5432/postgres

For example, given the following values:

    user: bob
    password: qwerty
    server: mypostgres

the connection URL would be:

    postgresql://bob%40mypostgres:qwerty@mypostgres.postgres.database.azure.com:5432/postgres

Additionally, add the setting LHCI_STORAGE__SQL_DIALECT with the value postgres.
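The connection URL format above can be sketched as a small function, which makes it clearer where each value goes and why the @ in the login appears as %40. The helper name buildConnectionUrl is hypothetical. Percent-encoding the password as well is an assumption on my part: it presumes the server decodes percent-escapes in the URL, as URL parsers typically do.

```javascript
// Hypothetical helper: builds the PostgreSQL connection URL in the format shown above.
function buildConnectionUrl({ user, password, server, database = 'postgres' }) {
  // The login takes the form user@server, and the "@" must be percent-encoded
  // (%40) so it isn't mistaken for the separator before the host name.
  const login = encodeURIComponent(`${user}@${server}`);
  // Assumption: escaping the password protects characters such as ":" or "@".
  const secret = encodeURIComponent(password);
  return `postgresql://${login}:${secret}@${server}.postgres.database.azure.com:5432/${database}`;
}

console.log(buildConnectionUrl({ user: 'bob', password: 'qwerty', server: 'mypostgres' }));
// → postgresql://bob%40mypostgres:qwerty@mypostgres.postgres.database.azure.com:5432/postgres
```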

As the Lighthouse CI server in the container is listening on port 9001, we need to configure the Azure web app to use this port, so add the application setting WEBSITES_PORT with the value 9001.

And with that, you should now be able to access the web app URL and see the Lighthouse CI server welcome page. From here, follow the same steps as earlier for configuring a local Lighthouse CI server, but replace the http://localhost:9001 server URL with the URL of the Azure web app.

Additional options for running Lighthouse CI

Lighthouse CI server also supports MySQL databases. The server configuration settings to use a different database are documented here.

The format for the application settings in Azure should be all uppercase, dots replaced with 2 underscores, words separated by 1 underscore and settings prefixed with LHCI_.

For example:

    storage.sqlConnectionUrl => LHCI_STORAGE__SQL_CONNECTION_URL

More information on environment variable naming is documented here.
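The naming rule above is mechanical, so it can be sketched as a small function. The helper name toEnvVarName is hypothetical and is not part of Lighthouse CI; it simply applies the rule as described in this article.

```javascript
// Hypothetical helper: converts a dotted configuration path into the
// application setting name described above.
function toEnvVarName(optionPath) {
  const parts = optionPath.split('.').map((part) =>
    part
      .replace(/([a-z0-9])([A-Z])/g, '$1_$2') // Separate camelCase words with 1 underscore
      .toUpperCase()
  );
  // Dots become 2 underscores, and everything is prefixed with LHCI_.
  return `LHCI_${parts.join('__')}`;
}

console.log(toEnvVarName('storage.sqlConnectionUrl'));
// → LHCI_STORAGE__SQL_CONNECTION_URL
```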

Additional reading

Lighthouse CI server containers can also be run in Google Cloud Kubernetes.